By Hans van der Meij and Marie-Louise Flacke

Abstract

Purpose: There is a need for error information in software documentation and training. Audits show that this need is typically not sufficiently addressed. This article addresses the issues of how and why errors should be part of design.

Method: A literature review summarizes what research has to say about the design and effectiveness of error-inclusive software documentation and training.

Results: Three main types of error-inclusive approaches are distinguished: (1) In an error-tolerant approach, error prevention is important, as is the presence of error information when needed. Minimalism is one representation of this approach. Minimalist theory proposes a training wheels technique to prevent error and just-in-time error information to support error management. (2) In an error-induced approach, the training arrangement almost guarantees that users engage in error handling during training. Its best-known representation is Error Management Training (EMT). Key features of EMT are the arrangement of an exploratory mode of task engagement during training and the communication that errors are opportunities for learning. (3) In an error-guided approach, known as Guided Error Training (GET), regular and corrective instructions are mixed. The main idea is that users benefit from instructions for task completion that alternate between expositions of correct and incorrect solution methods. Research on error-inclusive approaches shows that the training tends to be slightly less efficient but that, after training, users are better at performing regular tasks and have learned more error-management skills.

Conclusion: An error-inclusive approach to software documentation and training yields better learning outcomes and moderates user frustration with errors.

Keywords: error-inclusive; error management; software documentation and training

Practitioner’s Takeaway

Software documentation and training tend to focus too much on an error-exclusive approach.

Three types of error-inclusive approaches (error-tolerant, error-induced, and error-guided) provide food for thought on why and how errors can be treated in software documentation and training.

Research generally reveals that an error-inclusive approach yields higher learning outcomes and contributes to a more positive user attitude toward error.

INTRODUCTION

People are prone to make mistakes. No matter how carefully they intend to behave, errors are a natural by-product of active exploration and learning (Katz-Navon, Naveh, & Stern, 2009). By errors, we mean unintended deviations from plans, goals, or adequate feedback processes, as well as incorrect actions that result from lack of knowledge. In contrast, the term troubleshooting, with which error management is easily conflated, refers to software abnormalities such as bugs, incompatibilities, or component failures (see Farkas, 2010, 2011).

The abundant occurrence of error should induce technical communicators to design error-inclusive software documentation and training. However, error-exclusive approaches have dominated the field. Two consecutive audits of software manuals, covering a production period from 1980 until 2008, illustrate this. In 1996, van der Meij conducted a systematic analysis of error information in software documentation. The study showed that error information appeared infrequently, as one-third of the manuals included no error support at all. Only 19% of the tables of contents and 31% of the indexes included a keyword that signaled the location of error information in the manual (van der Meij, 1996). About ten years later, van der Meij, Karreman, and Steehouder (2009) published a follow-up study that showed modest improvements for error presence and accessibility. In this study, 21% of the tables of contents and 38% of the indexes of the manuals included a keyword that referred to the presence of error information. In short, these inventories signaled a lack of widespread adoption of an error-inclusive approach in software documentation and training.

An important argument given in favor of an error-exclusive approach is that it best serves the user’s main goal, which is to accomplish meaningful tasks. Users should be able to spend most of their time learning how to effectively accomplish regular tasks rather than on error-handling. To best use what little time the user is willing to spend on documentation and training, the information that is presented must concentrate on regular tasks, the argument goes. Research has investigated the validity of this argument by looking at training time, regular task performance, and error-management. In these studies, documentation with or without error information is compared. The following summarizes the outcomes.

Some studies have reported an increase in training time for an error-inclusive approach (McLaren, van Gog, Ganoe, & Karabinos, 2016), but other studies have found no differences (e.g., Kopp, Stark, & Fischer, 2008; Struve & Wandke, 2009). In addition, studies have generally reported more task completion during training for error-exclusive approaches, but sometimes error-inclusive approaches have yielded equal outcomes for task performances during training (McLaren et al., 2016). In contrast, studies have generally reported higher learning outcomes and better task performances after training for error-inclusive approaches (e.g., Adams et al., 2014; Chillarege, Nordstrom, & Williams, 2003; Durkin & Rittle-Johnson, 2012; Gardner & Rich, 2014; Heimbeck, Frese, Sonnentag, & Keith, 2003; Wood, Kakebeeke, Debowski, & Frese, 2000). Finally, error-inclusive approaches have been found to better address the users’ emotional states in dealing with error, and to increase knowledge of error and improve error correction skills more than error-exclusive approaches to documentation and training do (e.g., Cattaneo & Boldrini, 2017; Durkin & Rittle-Johnson, 2012; Heemsoth & Heinze, 2014; Lazonder & van der Meij, 1995).

In short, the literature provides ample support for adopting an error-inclusive approach to software documentation and training. This article concentrates on the three main types of error-inclusive approaches that exist in that field, namely error-tolerant, error-induced, and error-guided approaches. The description of their rationale, design features, and findings from research should benefit technical writers who would like to create error-inclusive software documentation and training.

AN ERROR-TOLERANT APPROACH

In an error-tolerant approach, errors are seen as interfering with the user’s regular task completion. Therefore, error prevention is an important aim. At the same time, it is acknowledged that not all user mistakes can or should be avoided or ignored, and, therefore, there must be some built-in support for error management. Minimalism is one theory that has consistently advocated an error-tolerant approach to software documentation and training. The minimalist method of supporting error recognition and recovery rests on two pillars:

- the use of training wheels

- just-in-time error information

Training Wheels (TW) Design

A training wheels design makes it easier for the user to learn to use a new product. It entails blocking options from an interface, usually by greying these out. In this way, the user can work with a seemingly intact interface. The design creates consistency in the organization of the interface. This is preferable to an interface that displays only a limited set of menu options.

There are two main reasons for employing a training wheels design. One concerns meaningfulness. The restrictions block options that have no meaning for the user. The other reason is that it shields the user from making certain errors. A training wheels design can restrict the options that the user can activate in order to prevent mistakes.

There are three main ways of reducing the system options available to the user (Carroll, 1990). One type of interface restriction focuses on maintaining meaningfulness. Certain interface options are made unavailable because they do not make sense considering what can be done. For instance, it does not make sense to print something if no document for printing is available. Another type of interface restriction concerns blocking of advanced system functions. The main role of this variant is to ward off deep-seated options that are meaningless for the user. The third and most common way of using a training wheels design is to create an interface that prevents tricky errors from occurring. This variant blocks access to options for which error recovery is too hard for the user.
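The three kinds of restriction can be illustrated with a small sketch. It is not taken from Carroll (1990) or from any of the studies reviewed here; the menu labels, the `Restriction` categories, and the function names are hypothetical and serve only to show how a lockout interface might keep all options visible while blocking some of them.

```python
from dataclasses import dataclass
from enum import Enum


class Restriction(Enum):
    """Reasons for blocking an option, mirroring the three types described above."""
    NONE = "available"
    NOT_MEANINGFUL = "not meaningful in the current state"
    ADVANCED = "advanced function"
    HARD_RECOVERY = "error recovery too hard for a novice"


@dataclass
class MenuOption:
    label: str
    restriction: Restriction = Restriction.NONE


def build_menu(document_open: bool) -> list[MenuOption]:
    """Hypothetical word-processor menu; the labels are illustrative only."""
    print_state = Restriction.NONE if document_open else Restriction.NOT_MEANINGFUL
    return [
        MenuOption("Open"),
        MenuOption("Print", print_state),
        MenuOption("Mail Merge", Restriction.ADVANCED),
        MenuOption("Delete All", Restriction.HARD_RECOVERY),
    ]


def render_menu(options: list[MenuOption]) -> list[str]:
    """Keep every option visible so the interface looks intact; mark blocked ones."""
    return [
        o.label if o.restriction is Restriction.NONE else f"({o.label}) [greyed out]"
        for o in options
    ]


def activate(option: MenuOption) -> str:
    """A blocked option never runs; it only reports that it is unavailable."""
    if option.restriction is not Restriction.NONE:
        return f"'{option.label}' is not available during training."
    return f"Running '{option.label}'."


menu = build_menu(document_open=False)
print("\n".join(render_menu(menu)))
print(activate(menu[3]))
```

In this sketch, the print option is blocked only while no document is open, whereas the advanced and hard-to-recover options stay blocked throughout training.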

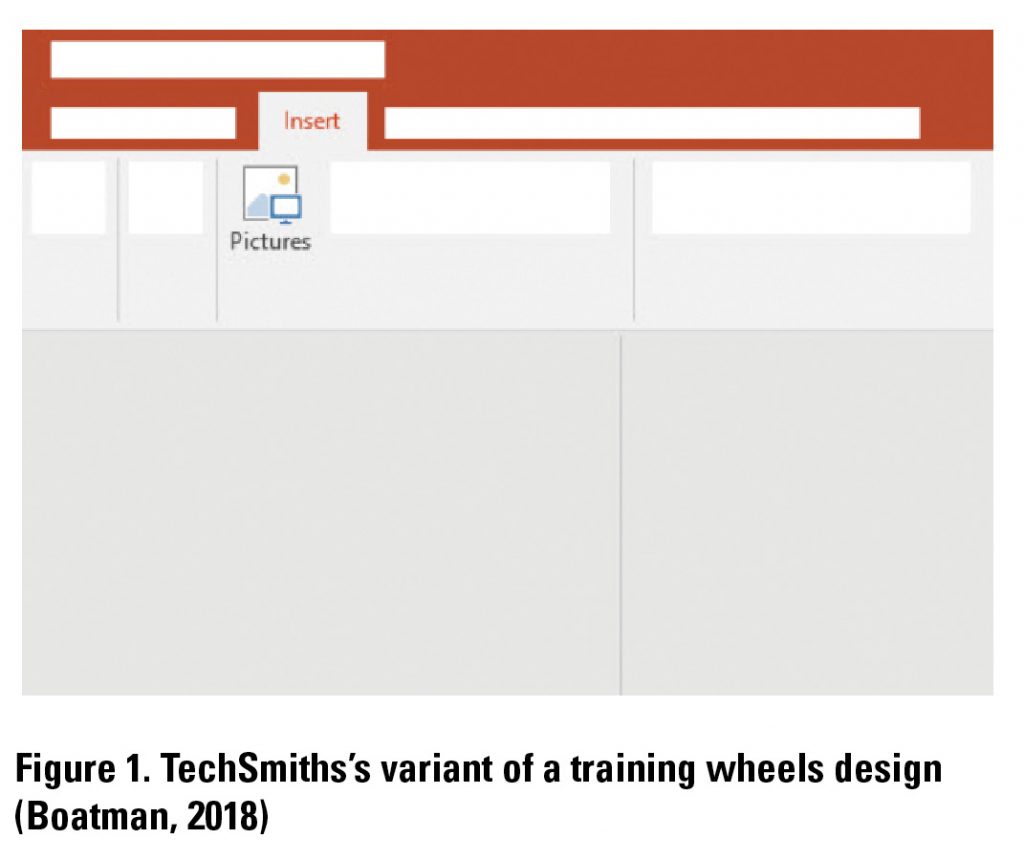

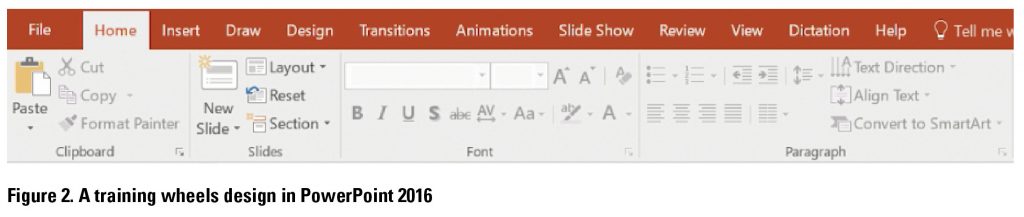

TechSmith recently introduced a variant of the training wheels design (see Figure 1). In this simplified user interface, all the non-relevant information is represented by shape rather than greyed out. This design falls between a greyed-out and a partial menu presentation. Figure 2 illustrates a permanent variant with a training wheels design that blocks out meaningless options. Most training wheels designs consist of a temporary scaffold that loses its relevance, or becomes obstructive, over time. Therefore, there should be fading; the removal of blocked options is best done gradually.
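Gradual fading can be sketched as a simple release schedule. The source states only that blocked options are best removed gradually; the rule used here (release one more option after every few completed tasks), the option names, and the function `released_options` are assumptions made for illustration.

```python
def released_options(blocked_in_release_order, tasks_completed, tasks_per_release=3):
    """Return the blocked options that have been released so far.

    One more option is released each time the user completes another
    `tasks_per_release` tasks, so the scaffold fades gradually rather
    than disappearing all at once. The threshold is an assumed value.
    """
    if tasks_per_release <= 0:
        raise ValueError("tasks_per_release must be positive")
    released = min(len(blocked_in_release_order), tasks_completed // tasks_per_release)
    return blocked_in_release_order[:released]


# Hypothetical options, ordered from first to last to be released.
blocked = ["Columns", "Mail Merge", "Macros"]
for done in (0, 3, 7, 20):
    print(done, released_options(blocked, done))
```

A designer would order the list so that options with the easiest error recovery are released first.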

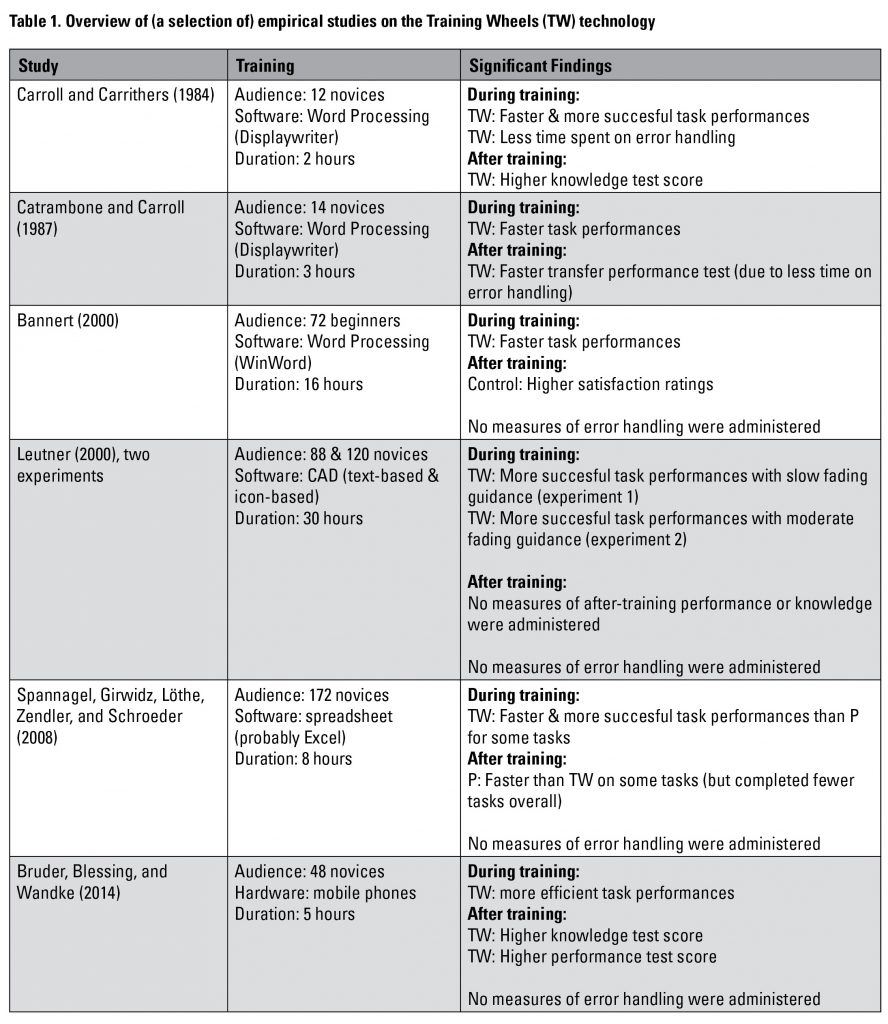

A limited set of studies on training wheels has been conducted, and the findings have been somewhat mixed. Table 1 presents a selected overview of the research. What is striking in the research is that training time has ranged from relatively short to relatively long in duration, and also that the number of participants involved in the training varied from meager to extensive. By and large, a training wheels design yields more successful task completion during training and reduces training time. Effects after training have been reported infrequently and mixed outcomes have been found.

Only the early studies from Carroll specifically measured error handling. These studies found the use of training wheels beneficial for error management skills development. The absence of measures on error handling is perhaps not surprising because the training wheels design is meant to prevent error. However, it seems obvious that, under a training wheels regime, errors still occur and should be within the user’s grasp. In fact, a training wheels technology is arguably the best testbed for error management training. The full potential of a training wheels technology for such training has yet to be studied thoroughly.

Takeaways of Training Wheels (TW) Design

- A training wheels design can shield users from meaningless functionality and can prevent serious errors from occurring during training.

- The design of training wheels often consists of creating a lockout interface. The restrictions should, in most cases, be faded as users become more experienced.

- Research has generally shown that training wheels increase the effectiveness and efficiency of a training.

- A training wheels design seems optimally suited for error management training.

Just-In-Time (JiT) Error Information Design

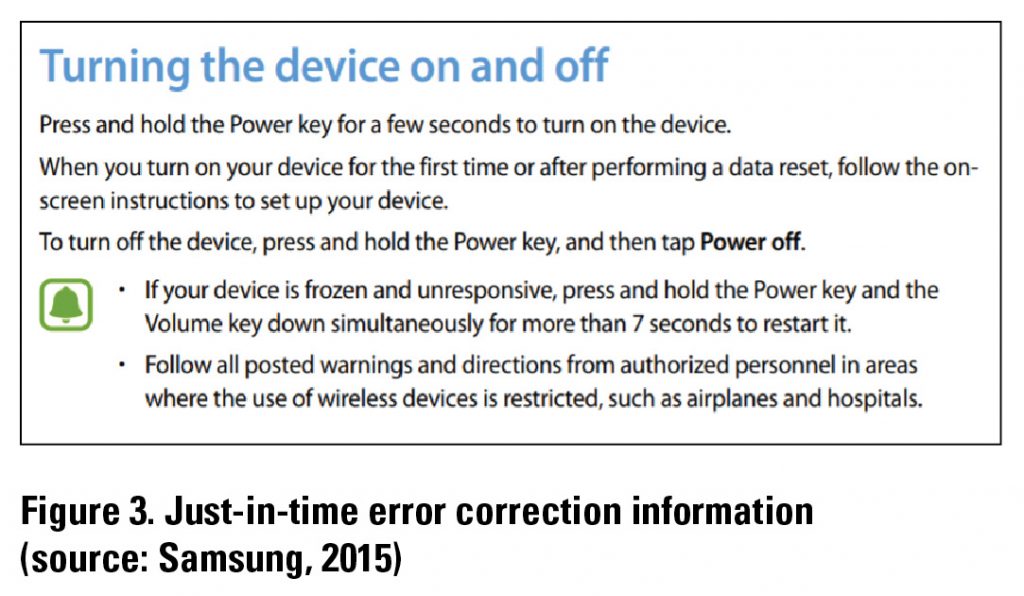

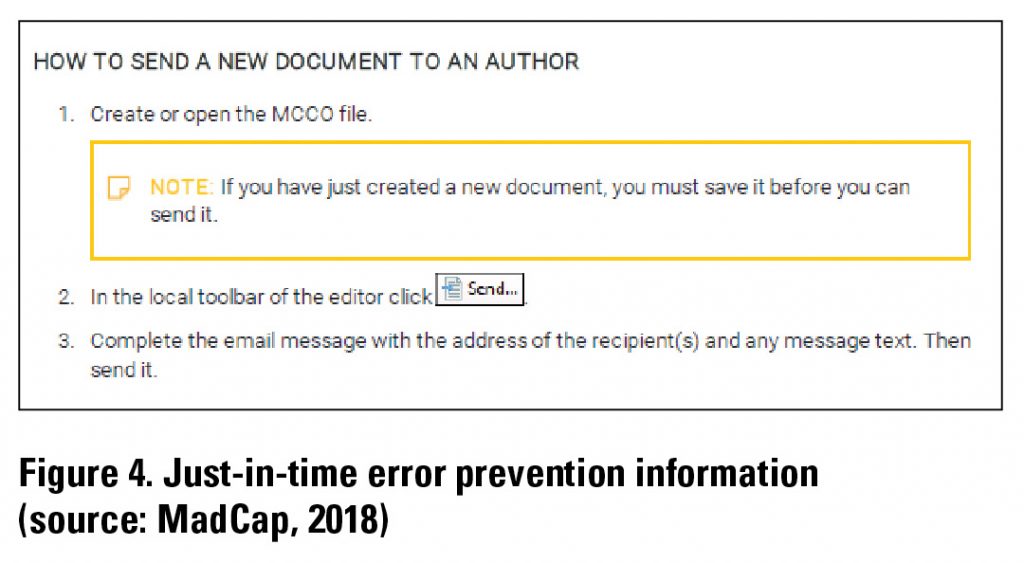

A just-in-time design for error information helps the user in handling error at the best possible moment, namely, immediately after its occurrence. The design hinges on inserting error information in the documentation where the user is likely to have made a mistake. Figure 3 illustrates an application of a just-in-time presentation for error handling. Figure 4 shows the use of a just-in-time presentation for preventing errors from occurring. In both examples, the error information stands out from the regular instructions so that it can easily be skipped.

The desirability of a just-in-time presentation of error information is advocated by the Four Components Model (van der Meij, Blijleven, & Jansen, 2003; van der Meij & Gellevij, 2004). There are several reasons why error information is preferably positioned in close proximity to error-prone actions (Lazonder, 1994; van der Meij & Carroll, 1998). First, a just-in-time presentation helps the user in catching an error early on. This facilitates error detection and prevents error entanglement, as there is a tendency for errors to accumulate after the user has made an initial mistake. Second, a just-in-time presentation avoids the need for contextualization. When error information is separated from the moment or source of the mistake, clarifying contextual information is often needed. A just-in-time presentation does not require such information and, therefore, can be shorter and more to the point. Third, having a mixture of regular and just-in-time error instructions moderates the user’s negative emotional state that is usually associated with error. The frequent inclusion of error information conveys the view that mistakes commonly occur and that the user is not to be blamed for committing them. The user’s habituation to errors can be further enhanced by including soothing statements such as “don’t worry,” or “this mistake occurs regularly.” A fourth argument in favor of a just-in-time presentation is that it can be conducive to error simulation. Research has revealed that a just-in-time presentation of error information also stimulates users to try out making a mistake. That is, after a flawless task performance, users occasionally (14% of all opportunities) retrace their steps and make a deliberate mistake in order to engage in error handling (Lazonder & van der Meij, 1995). Such explorations signal that the user is motivated to learn more about the software and trusts the documentation for guidance.
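The placement principle can be made concrete with a small sketch of a procedure in which error information is attached to the step where a mistake is likely, rather than collected in a separate section. The `Step` structure, the rendering convention (an indented, marked line), and the sample instructions are hypothetical; they are not drawn from the manuals used in the studies cited above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    action: str
    # Optional error information, shown directly after the error-prone step.
    error_info: Optional[str] = None


def render_procedure(steps: list[Step]) -> str:
    """Number the steps and place error information right after its step.

    The error line is indented and marked so that it stands out from the
    regular instructions and can easily be skipped.
    """
    lines = []
    for number, step in enumerate(steps, start=1):
        lines.append(f"{number}. {step.action}")
        if step.error_info:
            lines.append(f"    > {step.error_info}")
    return "\n".join(lines)


# Hypothetical procedure for moving text in a word processor.
procedure = [
    Step("Select the text you want to move."),
    Step(
        "Press Ctrl+X to cut the text.",
        error_info="If the wrong text disappeared, don't worry: press Ctrl+Z to restore it.",
    ),
    Step("Click where the text should go and press Ctrl+V."),
]
print(render_procedure(procedure))
```

Because the error line sits next to the action that triggers the mistake, it needs no extra context, which keeps it short.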

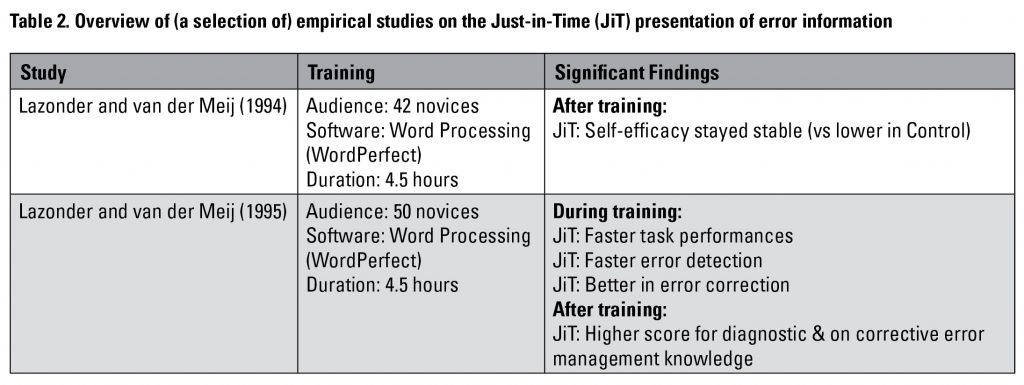

Research on a just-in-time presentation of error information is rare (see Table 2). Two consecutive studies by Lazonder and van der Meij (1994, 1995) showed that a just-in-time presentation enhanced efficiency during training, kept self-efficacy high, and yielded better error management knowledge and skill after training. Pratt (2000) compared the more common “error sections” approach to a just-in-time design for online help. She found no advantages of the latter, which was ascribed to the fact that the users were advanced beginners rather than novices.

There is more empirical support for the effectiveness of a just-in-time presentation of constructive information. For instance, two experiments revealed that a just-in-time presentation of codes for creating a Computer Numerically Controlled program yielded significantly more accurate task performance and better learning outcomes than did a non-just-in-time presentation (van der Meij, 2007). Also, the influential 4C-ID Model for complex learning advocates a just-in-time presentation as an important means to convey supportive procedural information to students (van Merriënboer & Kester, 2014; van Merriënboer, Kirschner, & Kester, 2003).

Takeaways of Just-in-Time (JiT) Error Information Design

- A just-in-time presentation of error information can serve several functions: facilitate error management, prevent error entanglement, yield compact help, normalize error moods, and stimulate error exploration.

- A just-in-time design means that error information is presented immediately after a (likely) mistake.

- Research on a just-in-time presentation of error information is rare. It suggests that this design can strongly enhance error management skills. There is more support for the effectiveness of a just-in-time presentation of constructive information.

AN ERROR-INDUCED APPROACH

In an error-induced approach, users are expected to become engaged in error handling during training, but this experience is neither guaranteed nor uniform across users. The view on errors is that they are important for learning and that error handling must be supported. However, this does not mean that errors should deliberately be created so that all users experience them similarly or, for that matter, that there will necessarily be any errors during training. Rather, the occurrence of errors is facilitated by the design of the documentation and training for software use. An error-induced approach applies more to instructor-led training than to documentation.

Possibly, the best-known example of an error-induced approach is Error Management Training (EMT). There are several features that characterize the EMT approach to error. One key aspect is that documentation and training revolve around an exploratory mode of task engagement. After a brief, general introduction, users are given task assignments that quickly become more difficult. There is limited support for completing them, as users are merely provided with overviews of the necessary commands in task execution instead of step-by-step instructions for task completion. This training arrangement yields ample opportunities for users to make mistakes. Another feature is that, just as for regular tasks, users must first try to deal with an error on their own. The instructor provides help only after the user has engaged with the mistake for a limited amount of time. Another EMT feature is that the communication about errors describes these as “wonderful” opportunities for learning. Users are explicitly encouraged to make errors, to perceive them as common occurrences, and to learn from them: “Errors are great because you learn so much from them” (Keith & Frese, 2005, p. 677). EMT studies regularly provide the user with a handout containing four heuristics for dealing with error (see Figure 5). An element that is occasionally found in EMT approaches is a “forced-error session” at the end of the training. This session revolves around extremely complex tasks or presents the user with flawed tasks that must be corrected.

An EMT approach generally has two main goals. One is to improve learning from the training. After training is completed, the user should be more capable of performing trained and untrained (transfer) tasks. In addition, the user should have developed a deep-seated understanding of the program. To achieve these aims, the user should encounter errors during training because errors challenge the user’s existing knowledge and contribute to mental model development. The other main aim is that the user’s stance toward error becomes more positive. Instead of perceiving errors as annoying and frustrating, EMT tries to convince the user that errors are beneficial for learning. In addition, the communication about errors should reduce the user’s frustration and negative mood that usually accompany the occurrence of error.

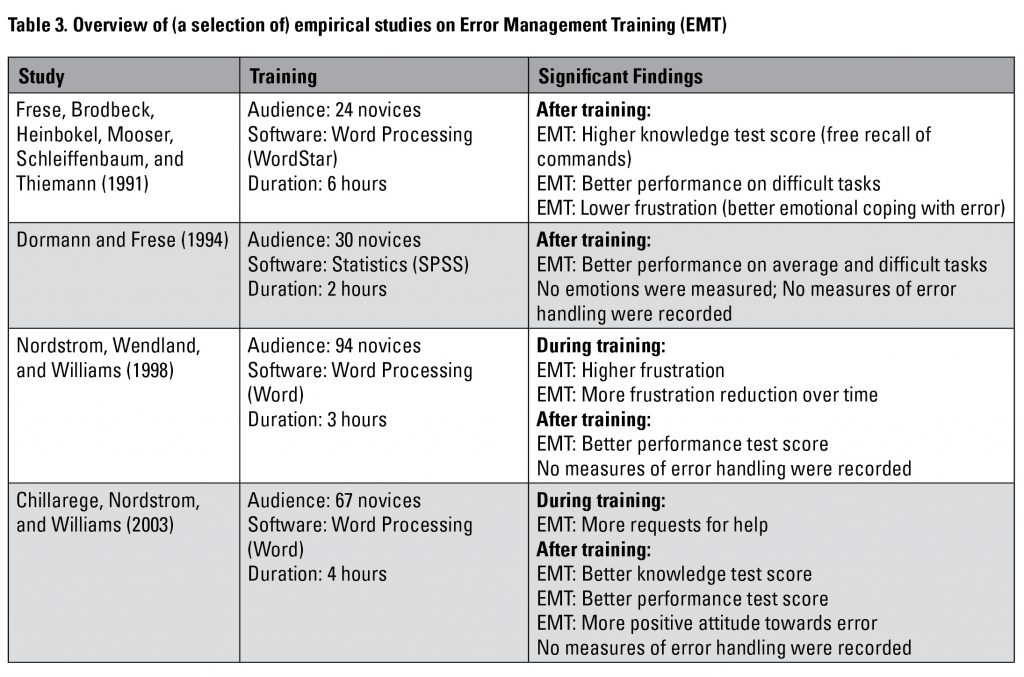

Most of the empirical research on EMT was conducted between 1990 and 2007, culminating in a meta-analysis in 2008 by Keith and Frese, two leading EMT researchers. Table 3 presents a few individual studies on EMT. Because a meta-analysis effectively summarizes the outcomes for EMT for a variety of users, contexts, and software programs, our description of empirical results concentrates on the findings from this article.

Keith and Frese (2008) found 24 empirical studies on EMT of which a large majority involved software training (e.g., word processors, spreadsheets, presentations). The study tested four hypotheses.

One hypothesis concerned the expectation that EMT is more effective than error-avoidant (control) approaches only in post-training tests. The rationale is that EMT users learn more about a program during training because they engage in error handling, but that these insights take time to develop and therefore decrease task successes during training. This hypothesis was confirmed. There were no significant differences between conditions for task performances during training, but a significant effect favoring EMT was found on post-training tests.

Another hypothesis stated that EMT yields especially strong effects on post-training transfer (novel) tasks. The reasoning behind this hypothesis is that EMT more strongly contributes to fundamental understanding, to building a mental model, than does an error-exclusive training. The findings supported this hypothesis. The EMT condition did better than the control on trained tasks, but the effect on transfer tasks was substantially stronger.

A third hypothesis stated that the effectiveness of EMT depends on the provision of clear feedback on task performances. This hypothesis was not confirmed. EMT conditions merely tended to be stronger than the control condition for tasks with clear feedback.

The fourth hypothesis stated that the effectiveness of EMT depends on the independent contribution of both active exploration and error management instructions. This hypothesis was confirmed. In other words, EMT’s support for error handling alone already significantly and positively affected task performances after training.

One feature that is notably absent in the meta-analysis is an assessment of the influence of EMT on emotional states and attitudes toward error. Early studies on EMT had reported positive effects on these measures (see Table 3). In this respect, the recent study of Steele-Johnson and Kalinoski (2014) provides further support for the view that error framing can be important both for the stance toward error and task performances. The study compared positive versus negative error framing in a software-based planning task. In positive error framing, people were encouraged to detect errors and to perceive them as a valuable contribution to learning (e.g., “it is okay to make mistakes” and “errors are a natural part of the learning process”). Positive error framing is more neutral than in EMT. Error occurrences are not praised, but just as in minimalism, such moments are described as common and to be treated as such. In negative error framing, people were also encouraged to notice errors, but they were told to avoid them, as they were a sign of inefficiency or failure to follow instructions (e.g., “you want to avoid errors at all costs” and “errors force you to work inefficiently”). The findings showed that error framing significantly affected metacognition, with positive framing yielding more activities during training such as planning and monitoring. Error framing also significantly affected self-efficacy, with positive framing contributing directly to stronger self-efficacy and indirectly to emotion control and performance success.

In summary, empirical studies contrasting EMT with error-exclusive approaches generally show that slightly fewer tasks are completed during training but that this is offset by significant advantages for cognition and motivation after training. A clear-cut advantage of EMT for the development of error management skills (i.e., detection, diagnosis, and correction) has not yet been investigated systematically, however. For instance, while several studies report having presented the EMT leaflet (see Figure 5), none of these studies gives any insights on the actual use of the four heuristics during training.

Takeaways of Error Management Training (EMT)

- An error-induced approach serves to enhance the user’s fundamental understanding of a program and mitigate error frustration.

- The best-known approach to error inducement is Error Management Training (EMT).

- EMT tries to stimulate errors to occur by creating a training that confronts the user with very complex tasks in combination with limited task performance support.

- EMT provides a leaflet with four heuristics for error management.

- EMT is outspoken in its communication to users about errors. Errors are described as wonderful opportunities for learning and hence should be cherished. The communication should reduce frustration.

- A recent study on positive error framing (less outspoken than EMT) points out that communicating that errors are common can be helpful for learning and motivation.

AN ERROR-GUIDED APPROACH (GET)

In a Guided Error Training (GET), users are confronted with correct as well as incorrect solutions to task problems. The mixture serves to develop a deeper understanding of a problem. In GET, error management is built into the design; GET ensures that the user always engages with error during training. This approach lends itself best to an instructor-led training but can be applied to documentation as well.

GET follows a classic instructional design paradigm in which instructions precede task practice. The support in GET primarily comes from worked examples. A worked example is a perfect (didactic) model of task performance (e.g., Atkinson, Derry, Renkl, & Wortham, 2000; Renkl, 2014). The prototypical worked example consists of an expert explanation that accompanies step-by-step information on how to solve a problem. Initial research on worked examples concentrated on flawless task performances, but now there is an increasing tendency to combine these with worked examples of flawed solution attempts (e.g., Durkin & Rittle-Johnson, 2012; Große & Renkl, 2007; van Gog, 2015). The error instructions in GET revolve around common errors in task performance or they address misconceptions. The user’s attention is drawn to the error information by flagging the mistake, giving expert commentary, and prompting the user to compare correct and incorrect solution methods. The latter is especially important for novices who might otherwise not benefit from the error information (e.g., Durkin & Rittle-Johnson, 2012; Struve & Wandke, 2009).

A key argument for the adoption of GET is that drawing attention to incorrect solutions stimulates the user to think more deeply about the correct solution. Doing so also helps the user to better distinguish how correct and incorrect solutions differ (Rohbanfard & Proteau, 2011). A related, theoretical argument is that the mixture of information helps the user to create a standard or reference point for evaluating task performance (Bandura, 1986). That is, the user is in a better position to monitor and assess an ongoing task performance. Furthermore, it has been argued that the mixture of correct and incorrect solution methods better prepares users for the tasks ahead, thus reducing the possibility that they will select the wrong solution method in the future (Cattaneo & Boldrini, 2017). Finally, Dror (2011) has argued that when GET instructions revolve around errors made by other people, users are more open to and accepting of errors than when they commit the errors themselves. According to misattribution theory, people are more likely to perceive errors of other people than their own (Pronin, Lin, & Ross, 2002).

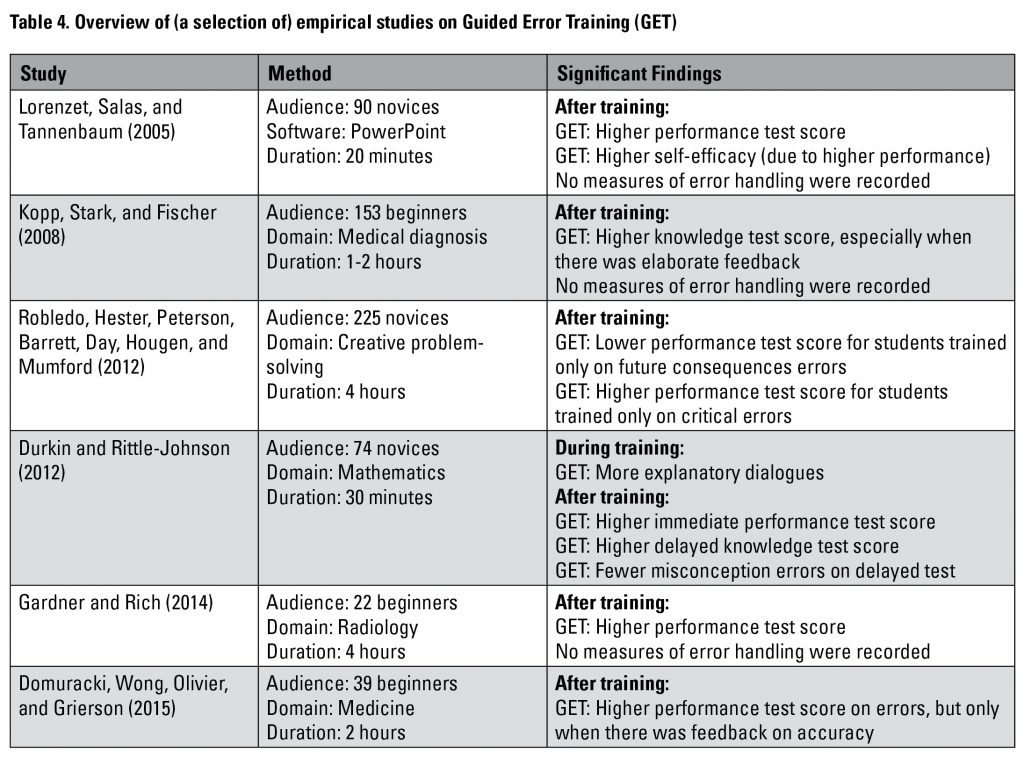

GET has been applied to the accomplishment of learning objectives on diverse issues such as motor skills development, healthcare decision-making, clinical skills learning, software training, and probability problem-solving. Table 4 illustrates a few of the studies. It should be noted that two of these studies state that they fall within EMT (i.e., Gardner & Rich, 2014; Robledo et al., 2012) but are here presented as GET because of their design. For instance, the participants in the study by Robledo were instructed on four aspects of error handling (future consequences, social consequences, controllability, and criticality) by means of a description, illustration of applications, and a test with feedback.

All in all, research shows that documentation and training become more effective when they include a mixture of correct and incorrect solutions. The benefits from presenting a mixture of solutions come at little extra cost, if any. The errors that are discussed in GET designs tend to originate from misconceptions or involve common mistakes. To optimize their effectiveness, erroneous examples should be accompanied by expert commentary, just as in regular worked examples.

Takeaways of Guided Error Training (GET)

- The primary aim of GET is to enhance the user’s knowledge.

- GET can help the user in: developing deep-seated domain knowledge, acquiring a standard or criterion of task performance, and preparing for future tasks (including error prevention).

- GET studies often do not distinctly target emotional states arising from error handling.

CONCLUSION

There are important benefits to be gained in changing from an error-avoidant to an error-inclusive approach in documentation and training. By and large, research reveals that an error-inclusive approach helps users to: (a) build a schema or mental model that facilitates transfer and prevents them from making certain mistakes, (b) speed up error detection and reduce the risk of error entanglement, (c) increase their capacity for making a correct error diagnosis, (d) improve their chances of error recovery, and (e) reduce the negative impact of errors on their mood state and motivation (e.g., Adams et al., 2014; Kopp, Stark, Kühne-Eversmann, & Fischer, 2009; Lazonder & van der Meij, 1995; Lorenzet, Salas, & Tannenbaum, 2005; Muller, Bewes, Sharma, & Reimann, 2008).

Acknowledgement

We would like to thank the reviewers for their helpful comments.

Note: The studies shown in the Tables can have more variety in set-up and reported measures than mentioned. Only statistically significant findings are reported; also, there is a concentration on main outcomes.

REFERENCES

Adams, D. M., McLaren, B. M., Durkin, K., Mayer, R. E., Rittle-Johnson, B., Isotani, S., & van Velsen, M. (2014). Using erroneous examples to improve mathematics learning with a web-based tutoring system. Computers in Human Behavior, 36, 401–411. doi:10.1016/j.chb.2014.03.053

Atkinson, R. K., Derry, S. J., Renkl, A., & Wortham, D. (2000). Learning from examples: Instructional principles from the worked examples research. Review of Educational Research, 70(2), 181–214. doi:10.3102/00346543070002181

Bandura, A. (1986). Social foundations of thought and action: A social cognitive theory. Englewood Cliffs, NJ: Prentice Hall.

Bannert, M. (2000). The effects of training-wheels and self-learning materials in software training. Journal of Computer Assisted Learning, 16, 336–346.

Boatman, A. (2018). Simplified user interface: The beginner’s guide. Retrieved from https://www.techsmith.com/blog/simplified-user-interface/

Bruder, C., Blessing, L., & Wandke, H. (2014). Adaptive training interfaces for less-experienced, elderly users of electronic devices. Behaviour & Information Technology, 33(1), 4–15. doi:10.1080/0144929X.2013.833649

Carroll, J. M. (1990). The Nurnberg Funnel. Designing minimalist instruction for practical computer skill. Cambridge, MA: MIT Press.

Carroll, J. M., & Carrithers, C. (1984). Training wheels in a user interface. Communications of the ACM, 27, 800–806.

Catrambone, R., & Carroll, J. M. (1987, April 5–9). Learning a word processor system with guided exploration and training wheels. Paper presented at the CHI + GI’87. Human factors in computing systems and graphics interface, Toronto.

Cattaneo, A. A. P., & Boldrini, E. (2017). You learn by your mistakes. Effective training strategies based on the analysis of video-recorded worked-out examples. Vocations and Learning, 10(1), 1–26. doi:10.1007/s12186-016-9157-4

Chillarege, K. A., Nordstrom, C. R., & Williams, K. B. (2003). Learning from our mistakes: Error management training for mature learners. Journal of Business and Psychology, 17(3), 369–385.

Domuracki, K., Wong, A., Olivieri, L., & Grierson, L. E. M. (2015). The impacts of observing flawed and flawless demonstrations on clinical skill learning. Medical Education, 49, 186–192. doi:10.1111/medu.12631

Dormann, T. J., & Frese, M. (1994). Error training: Replication and the function of exploratory behavior. International Journal of Human-Computer Interaction, 6(4), 365–372.

Dror, I. (2011). A novel approach to minimize error in the medical domain: Cognitive neuroscientific insights into training. Medical Teacher, 33, 34–38. doi:10.3109/0142159X.2011.535047

Durkin, K., & Rittle-Johnson, B. (2012). The effectiveness of using incorrect examples to support learning about decimal magnitude. Learning and Instruction, 22, 206–214. doi:10.1016/j.learninstruc.2011.11.001

Farkas, D. K. (2010). The diagnosis-resolution structure in troubleshooting procedures. Paper presented at the IEEE International Professional Communication Conference, Enschede, the Netherlands.

Farkas, D. K. (2011, January 27). Dissecting troubleshooting procedures. Paper presented at the ISTC, London, UK.

Frese, M., Brodbeck, F., Heinbokel, T., Mooser, C., Schleiffenbaum, E., & Thiemann, P. (1991). Errors in training computer skills: On the positive function of errors. Human-Computer Interaction, 6, 77–93.

Gardner, A., & Rich, M. (2014). Error management training and simulation education. The Clinical Teacher, 11, 537–540.

Große, C. S., & Renkl, A. (2007). Finding and fixing errors in worked examples: Can this foster learning outcomes? Learning and Instruction, 17(6), 612–634. doi:10.1016/j.learninstruc.2007.09.008

Heemsoth, T., & Heinze, A. (2014). The impact of incorrect examples on learning fractions: A field experiment with 6th grade students. Instructional Science, 42(4), 639–657. doi:10.1007/s11251-013-9302-5

Heimbeck, D., Frese, M., Sonnentag, S., & Keith, N. (2003). Integrating errors into the training process: The function of error management instructions and the role of goal orientation. Personnel Psychology, 56, 333–361.

Katz-Navon, T., Naveh, E., & Stern, Z. (2009). Active learning: When is more better? The case of resident physicians’ medical errors. Journal of Applied Psychology, 94, 1200–1209. doi:10.1037/a0015979

Keith, N., & Frese, M. (2005). Self-regulation in error management training: Emotion control and metacognition as mediators of performance effects. Journal of Applied Psychology, 90, 677–691. doi:10.1037/0021-9010.90.4.677

Keith, N., & Frese, M. (2008). Effectiveness of error management training: A meta-analysis. Journal of Applied Psychology, 93, 59–69. doi:10.1037/0021-9010.93.1.59

Kopp, V., Stark, R., & Fischer, M. R. (2008). Fostering diagnostic knowledge through computer-supported, case-based worked examples: Effects of erroneous examples and feedback. Medical Education, 42, 823–829.

Kopp, V., Stark, R., Kühne-Eversmann, L., & Fischer, M. R. (2009). Do worked examples foster medical students’ diagnostic knowledge of hyperthyroidism? Medical Education, 43, 1210–1217.

Lazonder, A. W. (1994). Minimalist computer documentation. A study on constructive and corrective skills development. (Doctoral thesis). University of Twente, Enschede, the Netherlands.

Lazonder, A. W., & van der Meij, H. (1994). Effect of error-information in tutorial documentation. Interacting with Computers, 6(1), 23–40. doi:10.1016/0953-5438(94)90003-5

Lazonder, A. W., & van der Meij, H. (1995). Error-information in tutorial documentation: Supporting users’ errors to facilitate initial skill learning. International Journal of Human-Computer Studies, 42, 185–206.

Leutner, D. (2000). Double-fading support: A training approach to complex software systems. Journal of Computer Assisted Learning, 16, 347–357.

Lorenzet, S. J., Salas, E., & Tannenbaum, S. I. (2005). Benefiting from mistakes: The impact of guided errors on learning, performance, and self-efficacy. Human Resource Development Quarterly, 16(3), 301–322.

MadCap. (2018). MadCap Contributor 8. Contribution workflow. Retrieved from http://docs.madcapsoftware.com/ContributorV8/ContributorContributionWorkflowGuide.pdf

McLaren, B. M., van Gog, T., Ganoe, C., & Karabinos, M. (2016). The efficiency of worked examples compared to erroneous examples, tutored problem solving, and problem solving in computer-based learning environments. Computers in Human Behavior, 55, 87–99. doi:10.1016/j.chb.2015.08.038

Muller, D. A., Bewes, J., Sharma, M. D., & Reimann, P. (2008). Saying the wrong thing: Improving learning with multimedia by including misconceptions. Journal of Computer Assisted Learning, 24, 144–155. doi:10.1111/j.1365-2729.2007.00248.x

Nordstrom, C. R., Wendland, D., & Williams, K. B. (1998). “To err is human”: An examination of the effectiveness of error management training. Journal of Business and Psychology, 12(3), 269–282.

Pratt, J. A. (2000). Instruction in microbursts: The study of minimalist principles applied to online help. (Doctoral dissertation). Utah State University.

Pronin, E., Lin, D. Y., & Ross, L. (2002). The bias blind spot: Perceptions of bias in self versus others. Personality and Social Psychology Bulletin, 28(3), 369–381.

Renkl, A. (2014). The worked examples principle in multimedia learning. In R. E. Mayer (Ed.), The Cambridge handbook of multimedia learning (2nd ed., pp. 391–412). New York, NY: Cambridge University Press.

Robledo, I. C., Hester, K. S., Peterson, D. R., Barrett, J. D., Day, E. A., Hougen, D. P., & Mumford, M. D. (2012). Errors and understanding: The effects of error-management training on creative problem-solving. Creativity Research Journal, 24(2–3), 220–234. doi:10.1080/10400419.2012.677352

Rohbanfard, H., & Proteau, L. (2011). Learning through observation: A combination of expert and novice models favors learning. Experimental Brain Research, 215, 183–197. doi:10.1007/s00221-011-2882-x

Samsung. (2015). SM-G920W8 User manual. Retrieved from http://downloadcenter.samsung.com/content/EM/201607/20160706032431715/SM-G920W8_UG_CA_E5a.pdf

Spannagel, C., Girwidz, R., Löthe, H., Zendler, A., & Schroeder, U. (2008). Animated demonstrations and training wheels interfaces in a complex learning environment. Interacting with Computers, 20, 97–111.

Steele-Johnson, D., & Kalinoski, Z. T. (2014). Error framing effects on performance: Cognitive, motivational, and affective pathways. The Journal of Psychology, 148(1), 93–111.

Struve, D., & Wandke, H. (2009). Video modeling for training older adults to use new technologies. ACM Transactions on Accessible Computing, 2(1), 4.1–4.24. doi:10.1145/1525840.1525844

van der Meij, H. (1996). Does the manual help? An examination of the problem solving support offered by manuals. IEEE Transactions on Professional Communication, 39, 146–156.

van der Meij, H. (2007). Goal-orientation, goal-setting and goal-driven behavior in (minimalist) user instructions. IEEE Transactions on Professional Communication, 50, 295–305.

van der Meij, H., Blijleven, P., & Jansen, L. (2003). What makes up a procedure? In M. J. Albers & B. Mazur (Eds.), Content & complexity. Information design in technical communication (pp. 129–186). Mahwah, NJ: Erlbaum.

van der Meij, H., & Carroll, J. M. (1998). Principles and heuristics for designing minimalist instruction. In J. M. Carroll (Ed.), Minimalism beyond the Nurnberg Funnel (pp. 19–53). Cambridge, MA: MIT Press.

van der Meij, H., & Gellevij, M. R. M. (2004). The four components of a procedure. IEEE Transactions on Professional Communication, 47, 5–14. doi:10.1109/TPC.2004.824292

van der Meij, H., Karreman, J., & Steehouder, M. (2009). Three decades of research and professional practice on software tutorials for novices. Technical Communication, 56, 265–292.

van Gog, T. (2015). Learning from erroneous examples in medical education. Medical Education, 49, 138–146. doi:10.1111/medu.12655

van Merriënboer, J. J. G., & Kester, L. (2014). The four-component instructional design model: Multimedia principles in environments for complex learning. In R. E. Mayer (Ed.), The Cambridge handbook of multimedia learning (2nd ed., pp. 104–148). New York, NY: Cambridge University Press.

van Merriënboer, J. J. G., Kirschner, P. A., & Kester, L. (2003). Taking the load off a learner’s mind: Instructional design for complex learning. Educational Psychologist, 38(1), 5–13. doi:10.1207/S15326985EP3801_2

Wood, R. E., Kakebeeke, B. M., Debowski, S., & Frese, M. (2000). The impact of enactive exploration on intrinsic motivation, strategy and performance in electronic search. Applied Psychology: An International Review, 49(2), 263–283.

ABOUT THE AUTHORS

Hans van der Meij is a Senior Researcher and Lecturer in Instructional Design & Technology at the University of Twente in the Netherlands. His research interests are technical documentation (e.g., minimalism and instructional video) and the functional integration of ICT in education. He has received several awards for his articles, including an IEEE “Landmark Paper” award for a publication on minimalism (with John Carroll). He is available at H.vanderMeij@utwente.nl.

Marie-Louise Flacke was a Senior Technical Communicator with experience in various European organizations such as Deutsche Boerse (Frankfurt/Main, DE), EU Council (Brussels, BE), Nortel Networks (Hamburg, DE), and Roche Diagnostics (Rotkreuz, CH). Focusing on usability and minimalism, she also set up on-site and on-line courses in technical communication (University Rennes 2, FR & Université Catholique de l’Ouest, Angers, FR).